On the evening of May 31, 2009, 216 passengers, three pilots, and nine flight attendants boarded an Airbus A330 in Rio de Janeiro. This flight, Air France 447, was headed across the Atlantic to Paris. The take-off was unremarkable. The plane reached a cruising altitude of 35,000 feet. The passengers read and watched movies and slept. Everything proceeded normally for several hours. Then, with no communication to the ground or air traffic control, Flight 447 suddenly disappeared.

Days later, several bodies and some pieces of the plane were found floating in the Atlantic Ocean. But it would be two more years before most of the wreckage was recovered from the ocean’s depths. All 228 people on board had died. The cockpit voice recorder and the flight data recorders, however, were intact, and these recordings told a story about how Flight 447 ended up at the bottom of the Atlantic.

The story they told was about what happened when the automated system flying the plane suddenly shut off, and the pilots were left surprised, confused, and ultimately unable to fly their own plane.

The first so-called “auto-pilot” was invented by the Sperry Corporation in 1912. It allowed the plane to fly straight and level without the pilot’s intervention. In the 1950s the autopilots improved and could be programmed to follow a route.

By the 1970s, even complex electrical systems and hydraulic systems were automated, and studies were showing that most accidents were caused not by mechanical error, but by human error. These findings prompted the French company Airbus to develop safer planes that used even more advanced automation.

Airbus set out to design what they hoped would be the safest plane yet—a plane that even the worst pilots could fly with ease. Bernard Ziegler, senior vice president for engineering at Airbus, famously said that he was building an airplane that even his concierge would be able to fly.

Not only did Ziegler’s plane have auto-pilot, but it also had what’s called a “fly-by-wire” system. Whereas autopilot just does what a pilot tells it to do, fly-by-wire is a computer-based control system that can interpret what the pilot wants to do and then execute the command smoothly and safely. For example, if the pilot pulls back on his or her control stick, the fly-by-wire system will understand that the pilot wants to pitch the plane up, and then will do it at just the right angle and rate.

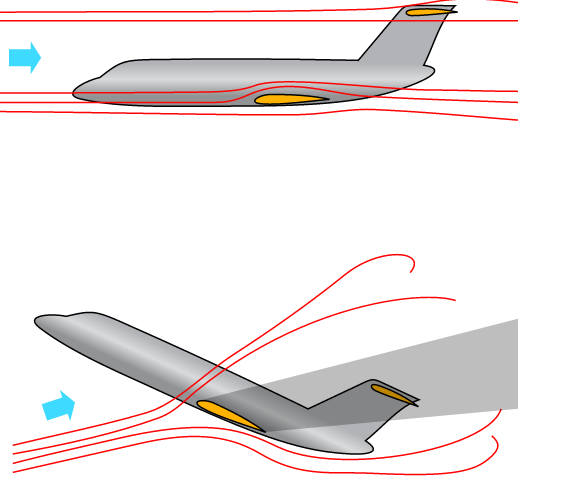

Importantly, the fly-by-wire system will also protect the plane from getting into an “aerodynamic stall.” In a plane, stalling can happen when the nose of the plane is pitched up at too steep an angle. This can cause the plane to lose “lift” and start to descend.

Stalling in a plane can be dangerous, but fly-by-wire automation makes it impossible to do. As long as it’s on.

Unlike autopilot, the “fly-by-wire” system cannot be turned on and off by the pilot. However, it can turn itself off. And that’s exactly what it did on May 31, 2009, as Air France Flight 447 made its transatlantic flight.

When a pressure probe on the outside of the plane iced over, the automation could no longer tell how fast the plane was going, and the autopilot disengaged. The “fly-by-wire” system also switched into a mode in which it was no longer offering protections against an aerodynamic stall. When the autopilot disengaged, the co-pilot in the right seat put his hand on the control stick—a little joystick-like thing to his right—and pulled it back, pitching the nose of the plane up.

This action caused the plane to go into a stall, and yet, even as the stall warning sounded, none of the pilots could figure out what was happening to them. If they’d realized they were in a stall, the fix would have been clear. “The recovery would have required them to put the nose down, get it below the horizon, regain a flying speed and then pull out of the ensuing dive,” says William Langewiesche, a journalist and former pilot who wrote about the crash of Flight 447 for Vanity Fair.

The pilots, however, never tried to recover, because they never seemed to realize they were in a stall. Four minutes and twenty seconds after the incident began, the plane pancaked into the Atlantic, instantly killing all 228 people on board.

Various factors contributed to the crash of Flight 447. Some people point to the fact that the Airbus control sticks do not move in unison, so the pilot in the left seat would not have felt the pilot in the right seat pull back on his stick, the maneuver that ultimately pitched the plane to a dangerous angle. But even if you concede this potential design flaw, it still raises the question: how could the pilots have a computer yelling ‘stall’ at them, and not realize they were in a stall?

It’s clear that automation played a role in this accident, though there is some disagreement about what kind of role it played. Maybe it was a badly designed system that confused the pilots, or maybe years of depending on automation had left the pilots unprepared to take over the controls.

“For however much automation has helped the airline passenger by increasing safety it has had some negative consequences,” says Langewiesche. “In this case, it’s quite clear that these pilots had had experience stripped away from them for years.” The Captain of the Air France flight had logged 346 hours of flying over the past six months. But within those six months, there were only about four hours in which he was actually in control of an airplane—just the take-offs and landings. The rest of the time, auto-pilot was flying the plane. Langewiesche believes this lack of experience left the pilots unprepared to do their jobs.

Complex and confusing automated systems may also have contributed to the crash. When one of the co-pilots hauled back on his stick, he pitched the plane to an angle that eventually caused the stall. But it’s possible that he didn’t understand that he was now flying in a different mode, one which would not regulate and smooth out his movements. This confusion about how the fly-by-wire system responds in different modes is referred to, aptly, as “mode confusion,” and it has come up in other accidents.

“A lot of what’s happening is hidden from view from the pilots,” says Langewiesche. “It’s buried. When the airplane starts doing something that is unexpected and the pilot says ‘hey, what’s it doing now?’ That’s a very, very standard comment in cockpits today.”

Langewiesche isn’t the only person to point out that ‘What’s it doing now?’ is a commonly heard question in the cockpit.

In 1997, American Airlines captain Warren Van Der Burgh said that the industry has turned pilots into “Children of the Magenta” who are too dependent on the guiding magenta-colored lines on their screens.

William Langewiesche agrees:

“We appear to be locked into a cycle in which automation begets the erosion of skills or the lack of skills in the first place and this then begets more automation.”

However potentially dangerous it may be to rely too heavily on automation, no one is advocating getting rid of it entirely. It’s agreed upon across the board that automation has made airline travel safer. The accident rate for air travel is very low: about 2.8 accidents for every one million departures. (Airbus planes, by the way, are no more or less safe than their main rival, Boeing.)

Langewiesche thinks that we are ultimately heading toward pilotless planes. And by the time that happens, the automation will be so good and so reliable that humans, with all of their fallibility, will really just be in the way.

Comments (16)

This week’s episode brought to you by the fine people at Boeing.

The music track used in this episode was Jon Hopkins – Vessel. Whoever is responsible for choosing it, I’d love to thank you! Here’s a YouTube link to the song: https://www.youtube.com/watch?v=uNa1AZpmndA

Love the podcast. However, this one hit me a little, as I remember how heartbreaking it was to read the transcripts of the pilots. What the captain must have felt once he realized what was happening, and realized there was nothing to stop it, has stuck with me. The statement in the article above, “The pilots, however, never tried to recover, because they never seemed to realize they were in a stall,” and the one in the podcast, “but in any case they never tried to do it” (“it” being to recover from the stall), are not correct. They make the crew in the story seem incompetent. The truth is that both co-pilot Robert and the captain tried to recover from the stall. The captain at the very end DID realize what had been happening.

http://www.popularmechanics.com/flight/a3115/what-really-happened-aboard-air-france-447-6611877/

“02:13:40 (Robert) Remonte… remonte… remonte… remonte…

Climb… climb… climb… climb…

02:13:40 (Bonin) Mais je suis à fond à cabrer depuis tout à l’heure!

But I’ve had the stick back the whole time!

At last, Bonin tells the others the crucial fact whose import he has so grievously failed to understand himself.

02:13:42 (Captain) Non, non, non… Ne remonte pas… non, non.

No, no, no… Don’t climb… no, no.

02:13:43 (Robert) Alors descends… Alors, donne-moi les commandes… À moi les commandes!

Descend, then… Give me the controls… Give me the controls!

Bonin yields the controls, and Robert finally puts the nose down. The plane begins to regain speed. But it is still descending at a precipitous angle. As they near 2000 feet, the aircraft’s sensors detect the fast-approaching surface and trigger a new alarm. There is no time left to build up speed by pushing the plane’s nose forward into a dive. At any rate, without warning his colleagues, Bonin once again takes back the controls and pulls his side stick all the way back.

02:14:23 (Robert) Putain, on va taper… C’est pas vrai!

Damn it, we’re going to crash… This can’t be happening!

02:14:25 (Bonin) Mais qu’est-ce que se passe?

But what’s happening?

02:14:27 (Captain) 10 degrès d’assiette…

Ten degrees of pitch…

Exactly 1.4 seconds later, the cockpit voice recorder stops.”

Langewiesche also says in the podcast, “and so they rode this aircraft down, expressing confusion the whole time, finally expressing certainty they were gonna die,” followed by him saying the plane pancaked into the water. They weren’t uncertain the whole time. In fact, the captain in the end knew exactly what had happened. I think this changes the narrative of this event in a big way. The captain realized at the end that the co-pilot was causing the crash by accident. The co-pilot who earlier tried to push the controls forward realizes as well that his co-pilot has been doing the wrong thing and begs for the controls. This is a different story than all three men having no clue. It comes down to one poor man’s confusion over what mode they were in, who to the VERY end… even after the captain had told him to descend… still pulled his stick all the way back.

Love the podcast. I just feel that the way this story is told does a disservice to the men in the cockpit. I’m not blaming Bonin, but to make it seem like all three men were slaves to autopilot and didn’t know what to do without it is wholly incorrect.

Cheers.

Hi Michel

You’re right that there is some indication that the pilots may have realized what was happening at the very, very end of the ordeal. Though no one ever says the word “stall,” so it’s hard to know for sure. But in any case, by then it was far too late to execute a recovery. So I disagree that this changes the narrative in any significant way. Even if it’s true that they did realize in the last seconds what had gone wrong, there’s still the question of how they could have all been hearing the automation saying “STALL STALL” something like 85 times in a row, and never have said, “Is it possible we’re in a stall?” How this could have happened is the question that the piece grapples with, and we give voice to various opinions. One is that the pilots were incompetent due to having experience stripped from them by automated systems, and one is that the automated systems are confusing in their design. Either way, this takes some of the blame off of the pilots themselves.

Thanks for your comment, and for listening thoughtfully.

-Katie

Michel Savard’s comment suggests that the podcast should be amended–it was misleading as to who understood what and who did what.

But since the subject is design, there is another issue–how much did failure to design a robust automation system contribute, by its failure to react properly to a complex situation, to the deadly outcome?

Assigning the cause of the crash to “human error” may be too simplistic, even inaccurate, even if, as in this instance, there were indeed human failures involved. It’s too easy to apply that label. If we leave it at that, then “human error” may block efforts to examine and eliminate systemic causes that contributed to the crash and that could have been, and should be, eliminated through proper design.

So yes, the fly-by-wire system disengaged. In my humble opinion, and I know nothing about these systems but still, there could have been a parallel system that would, perhaps by voice instruction, explain what was happening and what was the needed remedy. It could have repeated that over and over. Perhaps the automation could have been improved, and/or the way instruments proactively alerted the crew to the problematic action of the plane and the needed remedy.

You described a situation in which even the most experienced person in the cockpit had only a few hours of manual flying experience in a space of six months. That’s very predictable, and should have been anticipated. The system failed to adapt and to cope with the real world complexity that the pilots (and planes) were faced with. Yes, the crew could have done better. But yes, the design failed them.

There is also the question of why the controls were independent, so that the co-pilot on the left was unaware of the actions of the co-pilot on the right. There must be a reason for doing it that way. In normal flight, perhaps it wouldn’t matter, but did that design choice contribute to the loss of life in this, and perhaps other, abnormal incidents?

Of course the automation systems have increased safety–the statistics verify that. Still, it’s reasonable to ask if the designers were overconfident and failed to provide for the possibility of various failure modes. This is sometimes called resilience engineering. It would take human “failure” as just one possible parameter that design must deal with.

Maybe, at the time that plane was built, this was the best that could be done. It would be interesting to have heard if there was any reaction to the automation failure which would make this and other opportunities for “human error” less likely to have fatal outcomes now or in the future.

–Larry

Really enjoyed listening to this episode! I have some background in aviation and I was familiar with this incident from safety training during flight training.

I agree that we should look at systemic causes, but in the end it was as simple as human error that led to the crash. What wasn’t really addressed during the episode was the fact that this took place at night, over a pitch black ocean, in bad weather, at 35k ft… the pilots had no visual references and I am assuming they were completely disoriented. They couldn’t “feel” the stall and they did not trust their instruments. At that point I don’t think it would have made any difference how many warnings, alarm bells, buzzers, or verbal instructions they might have heard.

Spatial disorientation is one of the leading causes of aircraft accidents when visual conditions are not ideal, and pilots are trained to recognize and correct it. The aircraft has backup instruments, and ideally the pilots should have been able to refer to their backup instruments and bring the plane back under control. There were also breakdowns in communication and procedure that contributed to the accident. The responsibility for this rests squarely with the pilots. This is why the lack of actual stick time is significant to this case. Their manual flying skills were rusty, especially under instrument conditions. I don’t mean to demonize these pilots, but it’s a reality that you have to accept when you step into the cockpit. The pilot is responsible for the safe operation of the aircraft and their #1 job is aircraft control. They crashed a flyable airplane. While automation is meant to make flying safer, pilots cannot become overly reliant on those systems. Some military pilots are required to routinely demonstrate the ability to manually control the aircraft.

The failure of the design, in my opinion, is that it was not abundantly clear that the fly-by-wire protections were disconnected. Normally the computer would not have let them exceed the flight envelope. I assume that is why the copilot held the stick fully back. When flying manually you would almost never move the controls to the limit of travel. But I can see how a pilot could do that if they expected the computer to limit the control movements for them and they were confused and maybe a little panicked already. If the pilot(s) had known that he was in manual control, training might have kicked in a lot sooner.

Asiana Flight 214 is another recent instance of a crash caused when the pilots became confused about the automation settings and were unable to manually control the aircraft. Commercial aviation needs to start doing more to address the overreliance on automation.

I have spent 15 years flying airlines on both ends of the modern automation spectrum: long haul flying with two landings per month, and commuter flying with two landings by 9 am. While the podcast was fascinating, and “Children of the Magenta” is a real issue that is a focus of modern training, I disagree that AF447 fell to that problem.

“Children of the Magenta” refers to an over reliance on automation, but more specifically a reluctance to manually override automation when it is not performing as intended. Misbehaving computers are a puzzle to be solved and pilots naturally try to solve it, allowing the plane to drift off course while they do so. There is also reasonable concern that pilots are reluctant to manually override automation because they have lost the skills to do so, having been reliant on it for so long.

Neither of these brought down AF447. The pilots didn’t fail to override automation, they had an automation failure thrust upon them coincident with an instrument failure and nasty weather. Neither would a life of less automation have developed the skills to recover from a high altitude stall, any more than a life of walking makes you an adept dancer.

I believe AF447 exposes the extreme difficulty our minds have in accepting as true an idea strongly considered impossible. For an airline pilot, stalling while in cruise flight seems pretty impossible, doubly so for a fly-by-wire airplane with envelope protection. Sorting through contradictory information while dealing with a frightening emergency only adds to the difficulty, but overall the more impossible we consider an event to be, the more difficult it is to accept that it is happening.

Consider again the actions of the First Officer. His actions are doing everything possible for a fail-safe climb (if one assumes he believed FBW was still protecting him) yet his airspeed and altitude indications were either not present or indicating a severe descent. For him the idea of stall and steep descent must have seemed impossible. He got lost in the cognitive dissonance of that situation, of one action having an opposite and impossible reaction. That is a very different thing than failing to know what a stall is or how to identify one in progress. It’s a much deeper flaw in the way people interpret the world around them.

There are ways to ameliorate this problem through training and cockpit design, but blaming the issue on pilots gone lazy with automation is not one of them.

“Contributing to these rudder pedal inputs were characteristics of the Airbus A300-600 rudder system design and elements of the American Airlines Advanced Aircraft Maneuvering Program.”

see http://www.ntsb.gov/investigations/AccidentReports/Pages/AAR0404.aspx about the AA 587 crash.

It’s not that easy…

I’ve often thought that Air Inter 148 was a particularly interesting case from a design perspective. I remember endless conversations in college about the perils of UI controls that operate differently in different modes and debates about the grouping of related controls. In this accident we saw just such a problem. A single knob would be used to dial in a number which, depending on a separate mode selector, would specify a descent rate in feet per minute OR a descent angle. The number was displayed near the knob but might read -33 in the former mode or -3.3 in the latter. A person could (and likely did) easily overlook the decimal point. Another display read out the mode currently selected for the knob but it wasn’t grouped with the controls in question, making it even easier to fall into mode confusion.

Yes, there were numerous other problems on that flight, I just happen to find this particular detail interesting from a design perspective.

The controls in question were redesigned after that.
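The display problem described above can be sketched in a few lines of Python. This is only an illustration, not the actual avionics logic: the names `DescentMode` and `format_readout` are hypothetical, and the idea that one raw knob value is simply rendered with or without a decimal point is an assumption drawn from the description in this comment.

```python
from enum import Enum


class DescentMode(Enum):
    """Hypothetical stand-in for the separate mode selector."""
    VERTICAL_SPEED = "V/S"      # knob value read as a descent rate
    FLIGHT_PATH_ANGLE = "FPA"   # knob value read as a descent angle


def format_readout(dialed: int, mode: DescentMode) -> str:
    """Render the same raw knob value the way each mode would show it.

    Assumption: the only visible difference between the two modes is
    the decimal point, as the comment above describes.
    """
    if mode is DescentMode.VERTICAL_SPEED:
        return f"{dialed:d}"
    return f"{dialed / 10:.1f}"


rate = format_readout(-33, DescentMode.VERTICAL_SPEED)
angle = format_readout(-33, DescentMode.FLIGHT_PATH_ANGLE)
print(rate, angle)
```

The two strings differ by a single character, and nothing in the readout itself says which mode produced it. That is the grouping problem: the mode indication lives somewhere else on the panel.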

I am a commercial airline pilot who has trained on this scenario (prior to this incident) and experienced trying to orient after being “on break”. I have to say this is a pod-simplified version of the incident. First off, the “sleep hangover” from a break can last several minutes. This Captain was in the highly unenviable situation of trying to assess a perplexing set of data in the face of multiple alarms and warnings, aural and visual. Additionally, there was a specific design issue that was not mentioned and was not related to the “flight control law mode”. At less than 60 knots ground speed the warnings change (the major one being that the stall warning ceases). As the speed fluctuated above and below that threshold, the stall warning went away and then would resume as the nose was lowered and the aircraft accelerated above 60 kts GS. The incorrect conclusion is that lowering the nose is inducing the stall.
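A rough sketch of the warning behavior described above, in Python. It is a simplification under stated assumptions: the 60-knot cutoff is the figure quoted in this comment, while the angle-of-attack threshold `STALL_AOA_DEG` and the function name `stall_warning_active` are hypothetical and not taken from any Airbus documentation.

```python
MIN_VALID_SPEED_KT = 60.0  # cutoff quoted in the comment above
STALL_AOA_DEG = 10.0       # hypothetical angle-of-attack threshold


def stall_warning_active(aoa_deg: float, speed_kt: float) -> bool:
    """Return True if the (simplified) stall warning would sound."""
    if speed_kt < MIN_VALID_SPEED_KT:
        # Below the cutoff the warning goes silent,
        # even if the wing is deeply stalled.
        return False
    return aoa_deg > STALL_AOA_DEG


# A deeply stalled aircraft whose speed crosses the cutoff as the
# nose is lowered and then raised again (values are made up).
for aoa, speed in [(40.0, 45.0), (35.0, 70.0), (40.0, 50.0)]:
    print(f"aoa={aoa} speed={speed} warning={stall_warning_active(aoa, speed)}")
```

In this toy model the warning is silent in the worst condition and returns only once the nose comes down and speed builds, which matches the misleading feedback the comment describes.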

Having performed the exact situation in the simulator (prior to this incident) it is severely taxing to one’s piloting skills with no reference to the horizon and conflicting instrument displays.

The unauthorized use of Captain Vanderberg’s video is upsetting. This was not a “magenta” issue, it was actually a regime that was previously not well understood since it was never explained, taught to pilots or even well understood by Airbus. No engineer envisioned a commercial aircraft being in this regime. To be fair, the angle-of-attack was not widely taught to civilian pilots prior to this incident.

If the design deficient pitot tubes had been changed to the newer type this incident would have never occurred because the root causal factor would not have been present. The directive to change the pitot tubes to the newer model was out but the allowable time for change had not expired so this aircraft’s pitot system had not been upgraded.

In the future, the airliner cockpit will just have a man and a dog. The man’s job is to feed the dog. The dog’s job is to bite the man if he tries to touch the controls.

Could someone help me out? Does anyone have the name of the backing track that plays at 3:00?

This story inspired me to write a poem

https://medium.com/@JennaTulls/children-of-the-magenta-53a013df7b8e#.9x2q7hue8

“We appear to be locked into a cycle in which automation begets the erosion of skills or the lack of skills in the first place and this then begets more automation.”

Thanks for this fateful journey of Air France 447.

great work.

Good podcast