Most of our current auditory technologies revolve around either filtering out sounds (via earplugs and noise-cancelling headphones) or enhancing them (through hearing aids). Newer technologies, however, are beginning to fundamentally challenge our binary conception of everyday audio experiences. The following series of forward-thinking concepts, patents and gadgets are starting to transcend “off” and “on” and enter the realm of finely tuned augmented (auditory) reality.

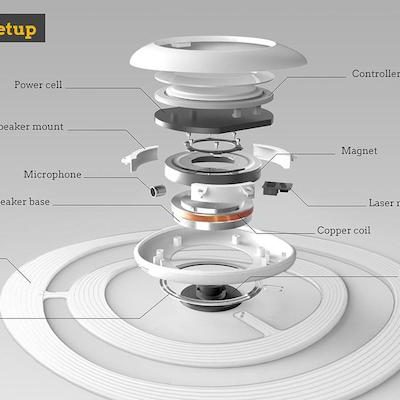

On the conceptual end of the spectrum, the Sono is a prototype device designed to cancel out everyday urban sounds. At its simplest, this smart gadget would allow users to reduce unwanted sounds, or “e-smog,” as its inventor Rudolf Stefanich calls city noise pollution.

In theory, Sono’s more advanced features are configurable. Various settings let city dwellers tune their surroundings to their taste and mood, dialing down shouting and sirens or turning up the chirps of nearby birds.

A simpler variant, the Muzo, is also in the works. Its creators claim it cancels noise and can even create mobile bubbles of privacy. The device recently raised hundreds of thousands of dollars via a crowdfunding campaign, but critics have doubts about its efficacy.

While working devices that can tune a whole room may still be a ways off, the recently released Here Active Listening System from Doppler Labs operates along similar lines but as a wearable. Tied to a smartphone app, this in-ear technology lets users tweak their everyday audio experience in compelling ways. Their Here One earbuds have been given out at festivals and are currently shipping to people who backed the product on Kickstarter. They will ultimately be commercially available through normal channels, priced at around $300 per pair.

The buds themselves filter inputs based on controls set on a paired mobile device. Wearers can select from presets addressing specific needs, like loud concerts or subway rides. Custom filters can also be programmed by users to address different settings. These controls can be used to limit the sounds of things like snoring or sirens or amp up the noises of nature while walking through a park.
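As a rough illustration of how presets and custom filters like these might be represented, here is a minimal sketch. Everything in it is hypothetical: the preset names, the sound categories and the `apply_filter` helper are invented for illustration and are not drawn from Doppler Labs' software. It assumes some upstream step has already labeled each sound source and estimated its loudness.

```python
# Hypothetical presets: each maps a sound category to a gain multiplier
# (below 1.0 turns a sound down, above 1.0 turns it up).
PRESETS = {
    "concert": {"music": 0.6, "crowd": 0.3},
    "subway": {"rumble": 0.2, "announcements": 1.0},
}


def apply_filter(levels, gains, default_gain=1.0):
    """Scale each labeled sound level by its gain; unlisted sounds pass through."""
    return {
        source: level * gains.get(source, default_gain)
        for source, level in levels.items()
    }


# A custom, user-defined filter for a walk in the park:
# boost birdsong, suppress sirens, leave everything else alone.
park_walk = {"birdsong": 1.5, "sirens": 0.1}

incoming = {"birdsong": 0.4, "sirens": 0.9, "footsteps": 0.2}
heard = apply_filter(incoming, park_walk)
```

Treating a filter as a plain mapping from category to gain keeps presets and user-made filters interchangeable: a venue could hand out its own mapping in exactly the same form.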

In a sense, devices like these allow the user to become their own DJ, shaping acoustic input at home or the office, in public spaces or private venues. Places like concert halls or outdoor festivals can also offer custom software settings that are aligned with a venue or event.

Modifying sound inputs can also go beyond filtering. Amazon recently patented a noise-canceling headphone technology that reduces or mutes audio input in response to external cues. This safety feature would turn down sound when, for instance, someone shouts the name of a wearer or when a certain noise is detected (like an alarm clock or honking car). Cutting off at the right time could save lives.
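The decision logic behind such a feature can be sketched in a few lines. This is not the method described in Amazon's patent, only a toy model: the `DuckingPolicy` class, the label names and the idea of a separate detector that emits text labels for what it hears are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class DuckingPolicy:
    """Hypothetical rule set for when headphones should cut their audio."""

    wearer_name: str
    alert_sounds: set = field(default_factory=lambda: {"alarm", "car_horn"})
    ducked_volume: float = 0.0  # 0.0 mutes playback entirely

    def playback_volume(self, normal_volume, detected_labels):
        """Return the volume to play at, given labels from an assumed detector."""
        labels = {label.lower() for label in detected_labels}
        name_called = self.wearer_name.lower() in labels
        alert_heard = bool(labels & self.alert_sounds)
        if name_called or alert_heard:
            return self.ducked_volume
        return normal_volume


policy = DuckingPolicy(wearer_name="Alex")
quiet_street = policy.playback_volume(0.8, ["speech", "wind"])
honking_car = policy.playback_volume(0.8, ["speech", "car_horn"])
```

In this sketch playback stays at its normal level on the quiet street and drops to the ducked level when the horn is detected. The hard part in practice is the detector itself, which this example simply assumes.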

Hardware engineers and software developers are also not the only ones working on parsing sounds. Researchers at EPFL recently developed an “acoustic prism” that physically splits a sound into its constituent frequencies (along the same lines as a light prism).

Consider the potential of this kind of advancement for built environments: sounds might eventually be parsed in real time without the help of computers or energy sources. Frequencies of unwanted sounds could be separated and selectively muted by material layers. Nuanced noise control could become part of everyday architecture, something designers specify as they would sun-filtering shades.
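For comparison, this is roughly what splitting a sound into frequencies and muting some of them looks like when a computer does the work. The sketch below is a software analogy only, not a model of the EPFL prism: it uses a naive discrete Fourier transform, and the tones, sample rate and band limits are toy values chosen so the arithmetic comes out cleanly.

```python
import cmath
import math


def dft(samples):
    """Naive discrete Fourier transform: split a signal into frequency bins."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def inverse_dft(spectrum):
    """Rebuild a real-valued signal from its frequency bins."""
    n = len(spectrum)
    return [
        sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)).real / n
        for t in range(n)
    ]


def mute_band(samples, sample_rate, low_hz, high_hz):
    """Zero every frequency bin between low_hz and high_hz, then resynthesize."""
    n = len(samples)
    spectrum = dft(samples)
    for k in range(n):
        # For a real signal, bin k and bin n - k carry the same frequency.
        freq = min(k, n - k) * sample_rate / n
        if low_hz <= freq <= high_hz:
            spectrum[k] = 0
    return inverse_dft(spectrum)


def tone(freq_hz, sample_rate, n):
    """A pure sine tone, used here as a stand-in for a real sound."""
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate) for t in range(n)]


SAMPLE_RATE = 800  # samples per second (toy value)
N = 80             # gives 10 Hz per frequency bin

wanted = tone(50, SAMPLE_RATE, N)     # stand-in for a sound to keep
unwanted = tone(200, SAMPLE_RATE, N)  # stand-in for a sound to remove
mixed = [a + b for a, b in zip(wanted, unwanted)]

filtered = mute_band(mixed, SAMPLE_RATE, 150, 250)

# How far the filtered signal is from the wanted tone alone.
residual = max(abs(f - w) for f, w in zip(filtered, wanted))
```

Because both toy tones fall exactly on frequency bins, muting the 150 to 250 Hz band leaves essentially just the wanted tone. Real sounds overlap in frequency and smear across bins, so the separation is never this clean.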

Parsing particular sounds is essential for medical and military applications, from hearing aids to TCAPS. Increasingly, though, companies are exploring more everyday applications that will allow civilians to reshape one of our most vital and heavily used senses. This may well be the start of a “hearables” trend in augmented reality.
